Changqian Yu

I build multimodal AI that sees, understands, and creates.

I lead the Kling-Image-Omni team at Kling AI, Kuaishou Technology, building multimodal foundation models for visual understanding and generation. My research focuses on diffusion models, vision-language models, and AI that can see, think, and create. I received my PhD from HUST (🏆 CSIG Top-10 Dissertation Award) and have been named to Stanford's Top 2% Scientists list for three consecutive years.

I lead the Kling-Image-Omni team at Kling AI, Kuaishou Technology, shipping products that power visual generation and understanding at scale. Key launches include Kling-Image-O1 — bringing visual reasoning to image generation — and Kling-Image 3.0 & 3.0 Omni, the latest generation of Kling AI’s omni-image foundation models.

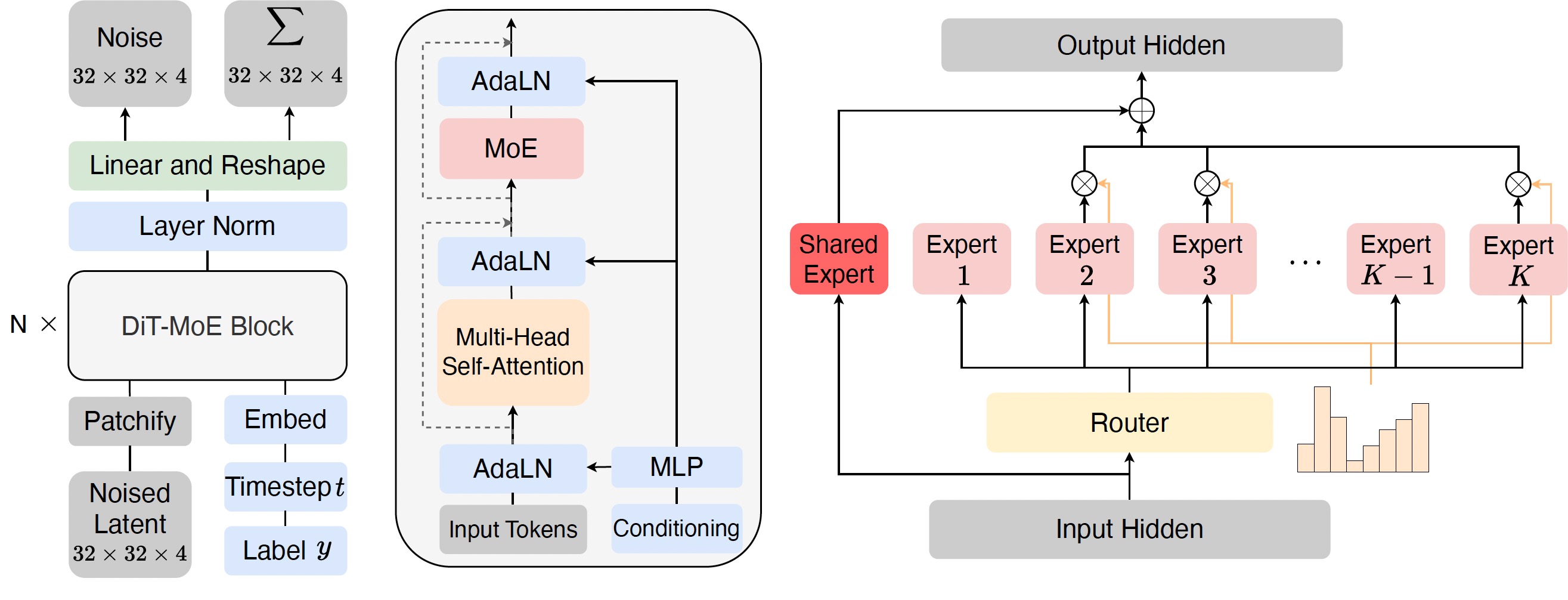

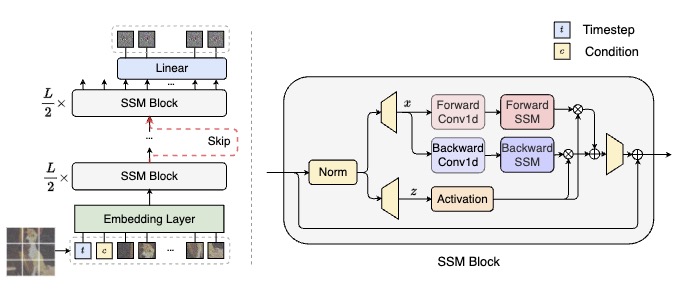

I led multimodal AI research at Kunlun Tech (Skywork). I shipped Skywork-VL-32B, a vision-language model that integrates vision encoders with large language models, and built the storyboard generation model that powers cinematic shot planning in SkyReels. I also built a scalable training pipeline for Mixture-of-Experts (MoE) diffusion models for text-to-image generation.

Research Scientist at Meituan’s Autonomous Delivery Department, developing trajectory prediction and motion planning models for the autonomous delivery fleet. The transformer-based prediction model was deployed on real vehicles serving millions of orders.

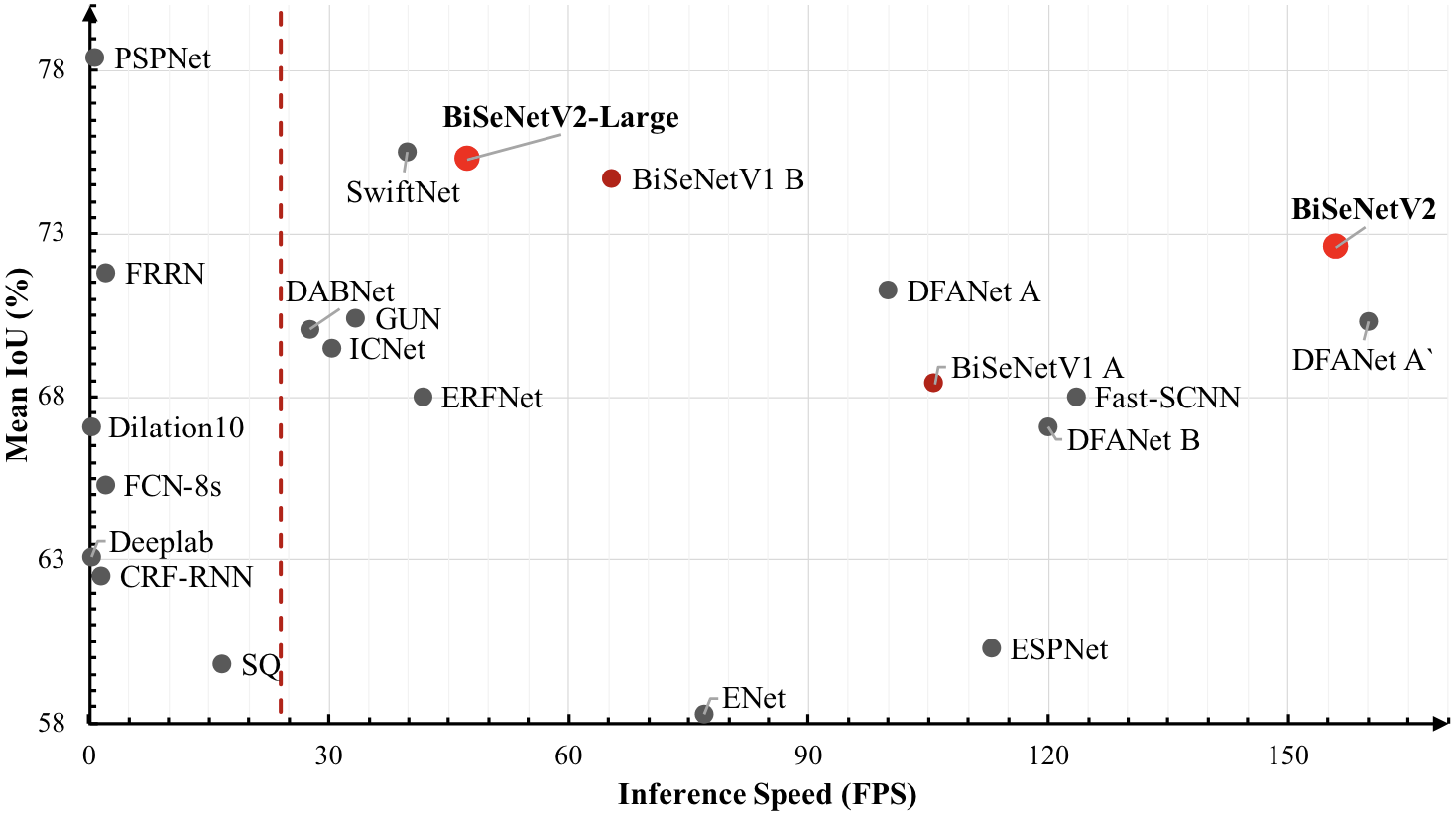

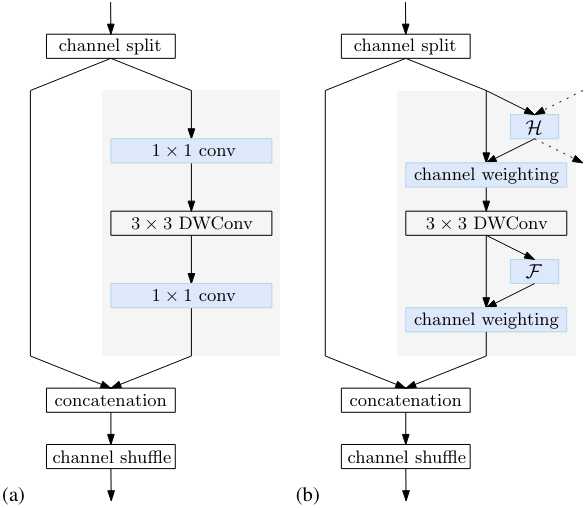

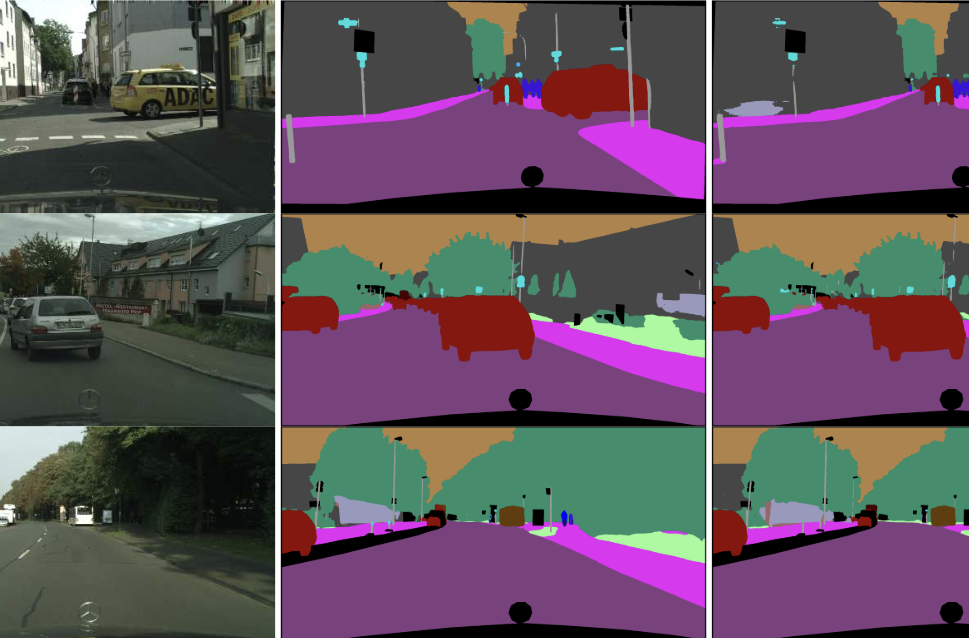

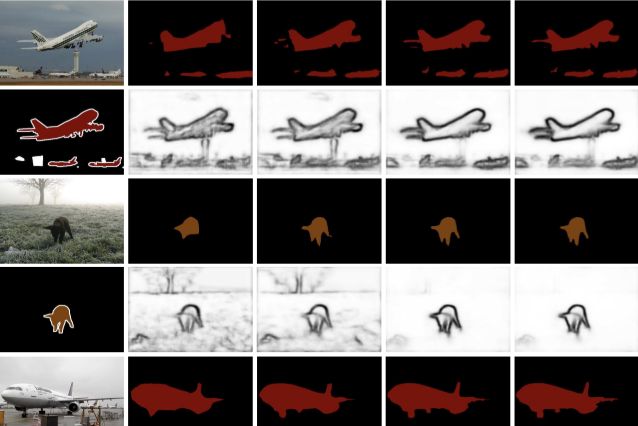

PhD at HUST, focusing on semantic and panoptic segmentation. Won 1st place in the COCO & Mapillary Panoptic Segmentation Challenge 2018 at ECCV. Built TorchSeg, a widely used PyTorch segmentation codebase (1 400+ GitHub stars). Visiting student at the University of Adelaide. Interned at Microsoft Research Asia (Stars of Tomorrow) and Megvii (Face++) Research.

- VQRAE [paper] — Representation Quantization Autoencoders for Multimodal Understanding, Generation and Reconstruction.

- SkyReels-V1 — Human-Centric Video Foundation Model. 2 700+ ⭐

- SkyReels-A1 [paper] — Expressive Portrait Animation in Video Diffusion Transformers. 500+ ⭐

- LiteHRNet [paper] — A Lightweight High-Resolution Network. 900+ ⭐

- TorchSeg — PyTorch semantic segmentation codebase — BiSeNet, DFN, DenseASPP and more. 1 400+ ⭐

- 2025: Paper VQRAE accepted at CVPR 2026.

- 2025: Paper CoTyle accepted at CVPR 2026.

- 2025: Received the Second Prize in Natural Science of the Science and Technology Award from the Chinese Institute of Electronics (CIE).